
“It was the general consensus of the signatories and roundtable participants that a next generation of AI is underway that is based on scientific principles and findings. This approach does not require monolithic models nor massive processing, but is capable of adaptive learning with far less computing resources than current LLMs.” — John Henry Clippinger, Bioform Labs

This next generation of AI is indeed underway. Core standards for its framework have been in development with the IEEE for over three years. The approach is already operational: its development is led by VERSES AI, which, after several years of private pilots and international programs, is currently running a Beta of its Genius™ platform with partners including NASA and Volvo, with plans to make it accessible to the public in 2024.

This group of leaders came together to discuss a letter signed by 25 neuroscientists, biologists, physicists, policy makers, AI researchers, entrepreneurs, and directors of research labs. The letter is a joint initiative proposing a radical rethinking of AI’s trajectory, advocating for a model of AI that is fundamentally different from the data-intensive, computationally expensive systems that dominate today’s landscape.

Their meeting signifies growing momentum behind a new approach to AI called Active Inference, developed by world-renowned neuroscientist Dr. Karl Friston, Chief Scientist at VERSES AI. It is an approach that leads to more transparent, ethical, and beneficial intelligent systems.

https://bostonglobalforum.org/news/letter-on-a-natural-ai-based-on-the-science-of-computational-physics-biology-and-neuroscience-policy-and-societal-significance/

This joint initiative, “Natural AI Based on The Science of Computational Physics and Neuroscience: Policy and Societal Significance,” proposes a new public narrative and research agenda that reimagines AI as an extension of the very principles that govern life itself, one grounded in the physics and biology of life. (The Letter in its entirety is included at the end of this article.)

The Letter – Image by Author

The Letter, presented by the global leaders behind this initiative, questions the current reliance on Large Language Models (LLMs) and Transformer models, which, while impressive, are based more on engineering ingenuity than on an understanding of life’s underlying processes. It suggests that a system modeled after biological processes would be more efficient, requiring less computational power and offering greater adaptability. This approach would represent a shift from AI as a tool to replicate human tasks, to AI as an organism that learns and evolves according to biological and physical principles.

Their concise yet compelling message challenges widespread views and outlines an alternative vision for technology more aligned with scientific realities.

The recent explosion in large neural networks like Large Language Models (LLMs) and Transformer Models, while demonstrating remarkable advances in language processing and content generation, has also raised valid concerns. Their sheer scale and computational complexity make them opaque “black boxes” that are poorly understood, hard to control, and have exhibited harmful biases, along with a slew of other issues.

Seeking to forge a new public narrative and research agenda around a Natural AI, grounded in the science of computational physics and neuroscience, with profound implications for policy and societal welfare, this letter warns against the perpetuation of these “corpus bound” models without a solid scientific foundation or performance standards, noting their potential to pose “existential” threats to humanity if left unchecked.

Meanwhile, the meteoric progress of systems like ChatGPT has fueled speculative claims about “Artificial General Intelligence” and existential threats. Yet there is little scientific evidence that these current AI systems bear any meaningful resemblance to human or biological intelligence. As this letter suggests, more pressing risks come from the overhype and misunderstanding surrounding them.

The signatories of the roundtable letter argue that a more credible and scientific path exists towards safer and more trustworthy AI systems inspired by the processes of life itself. This path is VERSES AI’s path — Active Inference.

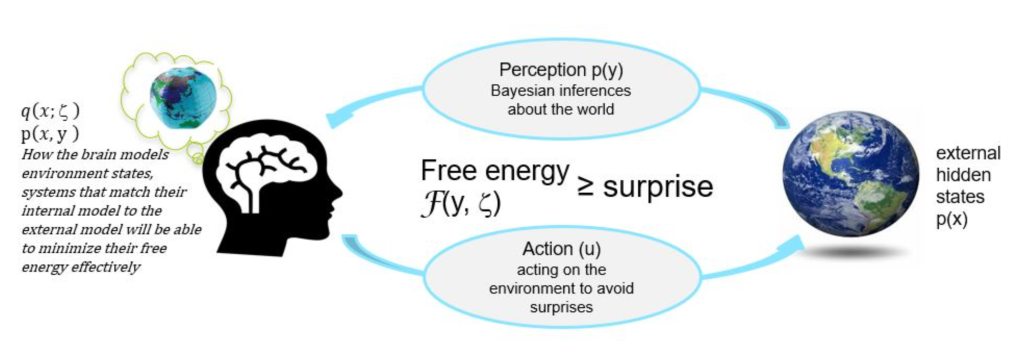

Image by permission from VERSES AI

At its core is the idea of the Free Energy Principle, discovered by Dr. Karl Friston, Chief Scientist at VERSES AI, which demonstrates that all living things act to preserve their identity by minimizing surprises in a changing environment. This drive translates mathematically into the imperative to minimize “variational free energy”. Models can then self-organize perception and action in line with their inherent goals and knowledge of the world.

https://www.kaggle.com/code/charel/learn-by-example-active-inference-in-the-brain-2/notebook
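To make the idea of minimizing “variational free energy” concrete, here is a minimal sketch in Python. It assumes a toy model with two hidden states and two possible observations; the numbers and the function names (`variational_free_energy`, `exact_posterior`) are illustrative assumptions, not taken from VERSES AI, the Genius™ platform, or the letter. It uses the standard textbook definition, F = Σ q(s) [ln q(s) − ln p(o, s)], and shows that free energy is always at least as large as surprise (−ln p(o)), with equality when the belief q matches the exact posterior. It covers only the perception side of the story, not action selection.

```python
import math


def variational_free_energy(q, prior, likelihood, obs):
    """Return F = sum_s q(s) * [ln q(s) - ln p(obs, s)] for discrete hidden states."""
    total = 0.0
    for s, q_s in enumerate(q):
        if q_s > 0.0:  # the term 0 * ln 0 is treated as 0
            joint = prior[s] * likelihood[s][obs]  # p(obs, s) = p(s) * p(obs | s)
            total += q_s * (math.log(q_s) - math.log(joint))
    return total


def exact_posterior(prior, likelihood, obs):
    """Return the Bayesian posterior p(s | obs) and the model evidence p(obs)."""
    joint = [prior[s] * likelihood[s][obs] for s in range(len(prior))]
    evidence = sum(joint)
    return [j / evidence for j in joint], evidence


# Hypothetical toy model (assumed values, for illustration only).
prior = [0.5, 0.5]                      # p(s)
likelihood = [[0.9, 0.1], [0.2, 0.8]]   # likelihood[s][o] = p(o | s)
obs = 0                                 # the observation actually received

posterior, evidence = exact_posterior(prior, likelihood, obs)
surprise = -math.log(evidence)

# Free energy of a naive belief (the prior) versus the exact posterior.
f_naive = variational_free_energy(prior, prior, likelihood, obs)
f_posterior = variational_free_energy(posterior, prior, likelihood, obs)

print(f"surprise             = {surprise:.4f}")
print(f"F (belief = prior)   = {f_naive:.4f}")
print(f"F (exact posterior)  = {f_posterior:.4f}")
```

In this sketch, updating the belief from the prior toward the posterior lowers free energy until it meets surprise, which is the sense in which minimizing free energy stands in for “minimizing surprises” in the paragraph above.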

Dr. Friston, who has been developing this methodology over many years, joined VERSES in order to commercialize the process so that it can be made available for the world to use.

Active Inference AI draws on advances in computational neuroscience, biology and statistical physics to create intelligent systems displaying key attributes of natural life, including autonomy, adaptability, and goal-directedness.

Active Inference enables a distributed network of shared intelligence containing a multitude of intelligent agents in cooperation with each other and humans, all perceiving, learning, and acting on the world in real time. Active Inference Agents are explainable, programmable, and auditable. This is the AI that enables safe and trustworthy autonomous intelligent systems (AIS) that can run mission-critical operations.

Unlike today’s giant yet brittle neural networks, Active Inference systems continue operating with transparency on the edge rather than needing continuous connectivity to distant mega-data centers. This makes them amenable to inspection, alignment and control.

Wider awareness of the scientific feasibility of safer, decentralized approaches to AI could positively reshape policy discussions in this area. Rather than resignation towards uncontrollable technological forces, regulators can proceed with grounded optimism around information and communication technologies for social good.

During the summer of 2023, VERSES AI published a groundbreaking AI governance report in partnership with Dentons Law Firm and the Spatial Web Foundation. The report, titled “The Future of Global AI Governance,” dives deep into the unique attributes of Active Inference AI that position it as a potential leader for establishing a governance framework around autonomous systems. Such a framework would adapt to socio-cultural differences, scale flexibly across different levels of AI capability, and adjust to the governance requirements and comfort levels surrounding various autonomous advancements in human and machine relationships.

Image by permission from VERSES AI

Transitioning towards distributed multi-scale, trustworthy AI need not depend on the agenda or resources of “Big Tech” incumbents. Alternative ecosystems of innovation can flourish around creating an “AI for the people and the planet”.

The letter presented to this roundtable argues for an approach to AI that mirrors the adaptability and efficiency found in natural neural systems. It highlights that the current AI models are not only resource-intensive but also fall short in self-explanation, self-reflection, and self-correction — traits that are inherent in biological organisms. By incorporating these natural traits, AI could achieve a level of autonomy and efficiency that has been previously unattainable.

Moreover, the application of the Free Energy Principle from neuroscience, which presumes that all living systems aim to minimize free energy to maintain homeostasis, could be the scientific bedrock for developing such AI systems. This principle could lead to the creation of AI that is not only energy-efficient but also self-organizing, capable of adapting to new environments and challenges in a manner that is similar to living organisms. By prioritizing these biological and physical principles, the development of AI would become more sustainable, accessible, and decentralized, breaking the current monopoly of ‘Big Tech’ companies over advanced AI systems.

The vision here extends beyond the technical aspects of AI, delving into its societal implications. The letter suggests that the integration of AI with human intelligence should be done in a manner that is beneficial and harmonious, rather than competitive or antagonistic. The envisioned AI works alongside human intelligence, enhancing our cognitive capabilities and addressing societal challenges in areas such as healthcare, education, and environmental preservation.

Furthermore, the call for actions at the end of the letter is a plea for a multidisciplinary approach to this new form of AI. It emphasizes the need for workshops and discussions among various stakeholders, including legislators, technologists, scientists, and the public, to ensure that the development of AI is aligned with ethical standards and societal needs. This collaborative approach is crucial to creating AI technologies that are not only advanced but also equitable and inclusive.

Coincidentally, VERSES AI, Dentons, and the Spatial Web Foundation proposed a global regulatory sandbox called The Prometheus Project, based on core technical standards. This public/private initiative offers a collaborative landscape that can be used for testing and aligning emerging AI technologies with suitable regulatory frameworks, using advanced AI and simulation technology, creating an environment where we can plan for the future and test socio-technical standards before their widespread adoption.

This sandbox enables governments, technology developers, and researchers to iteratively deploy, evaluate, and discuss new technology and standards’ impact, providing valuable insights into how emerging technologies can comply with existing regulations and how new regulatory frameworks might need to be adjusted. It also provides an environment for stakeholders to experiment, gain insights, and adapt regulations, thereby reducing barriers to entry and promoting innovation. This initiative, leveraging expertise and resources from both public and private sectors, aims to provide an efficient regulatory framework for increasingly autonomous AI systems, positioning AI as an opportunity rather than a threat.


This roundtable discussion between these leaders on December 12, 2023, highlighted the broad consensus on the potential prosocial benefits of such an approach, emphasizing the need for new policy and regulatory frameworks to guide its development. Participants agreed that while the new direction for AI promises significant advantages, it necessitates close collaboration between scientists, policymakers, technologists, and society at large to ensure its ethical and beneficial implementation.

The combined efforts of the Active Inference Institute, the Neuropsychiatry and Society Program, and the Boston Global Forum in prompting this roundtable and the accompanying letter represent a pivotal moment for AI. It’s a call to reevaluate our current path and to steer towards a future where AI is developed, not as an isolated technological pursuit, but as an integral part of the broader ecosystem of intelligent life.

The proposals presented reflect a deep understanding of both the potential and the pitfalls of current AI models, and they offer a vision for AI that is self-explanatory, self-reflective, and self-corrective, and not constrained by the limitations of big tech companies due to high computational costs. The letter and roundtable advocate for artificial intelligence that is distributed, energy-efficient, and capable of running on mobile devices, promoting privacy, security, and democratic use. They offer an AI future that is self-sustaining and, most importantly, in harmony with the principles that govern all life. These are all qualities and benefits of Active Inference AI.

The significance of this meeting, and the letter that preceded it, lies in the potential to reshape the entire landscape of AI development and policy, moving us towards a future where ‘natural’ rather than ‘artificial’ AI supports and enhances the best qualities of human and natural intelligence. By embracing AI grounded in scientific realities rather than science fiction tropes, society can overcome fear-mongering and advance together towards technology for the greater good.

We can look forward to a future where AI works in partnership and cooperation with humans rather than replacing or threatening us. But we need to unite now beyond narrow interests to make this positive and beneficial path a reality for global good.

A NATURAL AI BASED ON THE SCIENCE OF COMPUTATIONAL PHYSICS, BIOLOGY AND NEUROSCIENCE: POLICY AND SOCIETAL SIGNIFICANCE

December 12, 2023

Introduction:

The astonishing achievements of Large Language Models (LLMs) and Transformer models have exceeded the expectations of even their most ardent supporters. Foundational advances were made in the discovery of the power of Markov processes, tensor networks, transformers, and context-aware attention mechanisms. These advances were guided, not by specific scientific hypotheses, but by sheer engineering ingenuity in the application of mathematical and novel machine learning techniques. Such approaches have relied upon massive computational capabilities to generate and fit billions of parameters into models and outputs that achieve outcomes tied to the expectations of their respective creators. Notwithstanding the massive advances in system performance, potential use cases, and adoption of such systems, no scientific principles nor independent performance standards were referenced or applied to direct the research and development, nor to evaluate the adequacy of their outputs or contextual appropriateness of their performance. Consequently, all current LLMs and Transformer models are “corpus bound,” and their parameter-setting criteria are confined to an inaccessible and undecipherable stochastic “black box”.

The rapid advance and notable successes of LLM and Transformer models in processing information is historically unprecedented and has led to proclamations, by some reputable individuals, of “existential” threats to human civilization and emergent “super intelligence” or “Artificial General Intelligence”. No doubt the potential for intentional abuse (and negligent application) of such powerful and novel technologies is enormous, and likely to dwarf those harms arising in social media contexts. However, suppositions as to what constitutes “intelligence”, much less a “super” or “AGI” are ill founded and foster highly misleading public narratives of the future of intelligent systems generally. This de facto narrative is rooted in popular tropes characteristic of apocalyptic science fiction, but are not supported by scientific evidence. Contrary to the popular narrative, a substantial and credible body of scientific research exists today, grounded in computational neuroscience, biology, and physics, that supports a much more nuanced, and ultimately positive and tractable narrative relating to the phenomenon of intelligences. This perspective is one that integrates AI, human intelligence, and other intelligent forms into an overall description and understanding about the interconnected “intelligences” of all “living things”. The emerging field of Diverse Intelligence, which is highlighting forms of cognition in unconventional substrates is an essential part of the AI debate, and a necessary balance to misguided comparisons to human minds as the essential rubric for evaluating AI.

This alternative scientific narrative that harmonically couples conceptions of “life” and “intelligence” is the precursor to next generation forms of ultra-high capacity, distributed AI composed of self-explanatory, self-reflective, and self-corrective intelligences.

Without a proper and nuanced scientific understanding of current and future AI technologies, policies and regulations intended to manage AI systems and their impacts are likely to be misdirected and ineffective.

Neural systems arising in nature have evolved to achieve an impressive array of adaptive capabilities. Human societies and the capacity for symbolic communication, have leveraged natural evolution of fitness to the point where human organisms can convey information across time and space, fostering the accumulation of knowledge at an ever-accelerating pace. The human mind and its extensions have advanced to the point where it can create AI.

Why is the human brain-mind relevant to the future of AI? A deep understanding of the structure and functions of the human brain and its emergent mental functions can not only help shape future technological possibilities (with and beyond neural network models), but will also be essential in optimizing how human intelligence integrates and works with artificial intelligence in a pro-social, rather than anti-social, manner. The human brain-mind has evolved in ways that lead to both advantages and limitations. It will be necessary to work synergistically with AI (including, for example, possible brain-computer interfaces in the treatment of diseases), and to guide its ethical development.

Critical misunderstandings are not just scientific and academic but in a broader economic, policy and social structural contexts as well. For example, due to their high computational costs and dependency on large volumes of training data, LLMs and Transformer models are broadly presumed to be only affordable for commercialization by “Big Tech” companies. Hence, the argument is made that Big Tech should be courted and granted special consideration by regulators and deference by the general public. But this need not be the case, as AI technology does not have to be monolithic nor concentrated to be successfully commercialized and appropriately regulated. In the very near future distributed and biologically grounded intelligences will have the capacity to run on mobile devices with far less energy than current systems, and with the intrinsic ability to self enforce and self correct their actions and goals, vastly outperforming current and future centralized AI system architectures. Such transparent cognitive architectures and edge infrastructures upon which such future intelligence infrastructures will run will be critical to preserving privacy and security and in attaining the equitable, sustainable and democratic use of this promising and necessary technology.

Call for actions:

We the undersigned signatories believe that it is vital at this juncture in the commercialization and regulation of AI that an alternative and science-based understanding of the biological foundations of AI be given public voice and that interdisciplinary public workshops be convened among legislators, regulators, technologists, investors, scientists, journalists, NGOs, faith communities, the public and business leaders.

Through the combined efforts of the Active Inference Institute, whose founding principles are grounded in science and the computational physics and biology of living intelligences and open technologies, the Neuropsychiatry and Society Program, whose focus is bringing an understanding of the human brain-mind to societal issues and technological developments, and the Boston Global Forum, whose mandate is the formation of global policies and AI World Society model for the inclusive and beneficial application of AI, there can be real transformative change in the way we approach, develop, and integrate artificial intelligence into our societies.

Signed,

Signatories list:

All content for Spatial Web AI is independently created by me, Denise Holt.

